Pydantic Deep Agents vs LangChain Deep Agents: Which Python AI Agent Framework Should You Choose in 2026?

Deep agents run autonomously for minutes or hours, planning work, editing files, spawning sub-agents, and managing their own context. Think Claude Code, Cursor, or Devin. Right now, there are two open-source Python frameworks that do this: LangChain Deep Agents (built on LangGraph) and Pydantic Deep Agents (built on Pydantic AI).

We actively maintain Pydantic Deep Agents and have read every line of LangChain’s implementation. This is an honest comparison: what each does well, where each falls short, and which you should choose for your use case.

What are Deep Agents?

A regular AI agent calls a tool, gets a result, and responds, with the LLM directly driving each step. A deep agent still uses tool calls, but it runs inside a persistent runtime that plans, manages state, executes tools repeatedly, and coordinates long-running tasks autonomously. Think Claude Code and Devin: they do not just react to one request; they break down complex goals, maintain context across dozens of steps, and self-correct when things go wrong.

Six capabilities make this possible:

- Planning – Breaking a vague request into concrete subtasks with dependencies.

- Filesystem – Reading, writing, editing, searching. Real grep, glob, pagination, multi-file editing.

- Shell execution – Running commands in sandboxed environments. Build, test, lint, deploy.

- Sub-agent delegation – Spawning specialized workers for parallel tasks.

- Context management – When a conversation outgrows the model’s context window, compress intelligently instead of crashing.

- Lifecycle hooks – Intercept every tool call for safety checks, audit logging, cost tracking, or custom logic.

Both frameworks implement all six. The question is how, and the architectural choices create real trade-offs.

Getting started

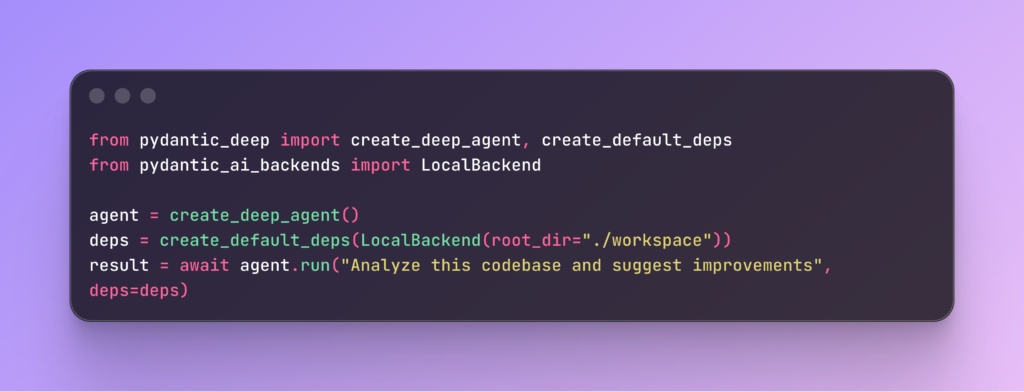

Both frameworks give you a working deep agent in three lines:

Pydantic Deep Agents:

    from pydantic_deep import create_deep_agent, create_default_deps
    from pydantic_ai_backends import LocalBackend

    agent = create_deep_agent()
    deps = create_default_deps(LocalBackend(root_dir="./workspace"))
    # agent.run is a coroutine: await it inside an async function or a notebook cell
    result = await agent.run("Analyze this codebase and suggest improvements", deps=deps)

LangChain Deep Agents:

    from deepagents import create_deep_agent

    agent = create_deep_agent()
    result = agent.invoke({
        "messages": [{"role": "user", "content": "Analyze this codebase and suggest improvements"}]
    })

Both give you planning, filesystem tools, shell execution, sub-agents, and context management out of the box.

How they work under the hood

LangChain Deep Agents layer 8 middleware types onto a LangGraph agent. Each middleware hooks into the lifecycle (before_agent, wrap_model_call, before_tools, after_tools). The result is a compiled state graph with native streaming and persistence. The big payoff: LangGraph Studio lets you visually debug agent states in real time.

Pydantic Deep Agents compose standalone toolsets on a Pydantic AI agent. Each capability is a separate PyPI package: pydantic-ai-backend for filesystem, pydantic-ai-todo for planning, subagents-pydantic-ai for delegation. The big payoff: you pick what you need. Need just a sandbox for your existing agent? pip install pydantic-ai-backend, done.
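To make the lifecycle-hook idea concrete, here is a minimal sketch in plain Python. It follows neither framework's real API: `Hook`, `AuditLog`, `BlockDestructiveShell`, and `run_tool` are hypothetical names chosen for illustration. It only shows the general pattern of running code before and after every tool call.

```python
from typing import Any, Callable, Dict, List, Tuple


class Hook:
    """Hypothetical base hook: subclasses override only the stages they need."""

    def before_tool(self, name: str, args: Dict[str, Any]) -> None:
        pass

    def after_tool(self, name: str, result: Any) -> Any:
        return result


class AuditLog(Hook):
    """Records the start and the completion of every tool call."""

    def __init__(self) -> None:
        self.entries: List[Tuple[str, str]] = []

    def before_tool(self, name: str, args: Dict[str, Any]) -> None:
        self.entries.append(("call", name))

    def after_tool(self, name: str, result: Any) -> Any:
        self.entries.append(("done", name))
        return result


class BlockDestructiveShell(Hook):
    """Safety check: refuse shell commands containing a forbidden fragment."""

    def before_tool(self, name: str, args: Dict[str, Any]) -> None:
        if name == "shell" and "rm -rf" in args.get("command", ""):
            raise PermissionError("blocked destructive shell command")


def run_tool(name: str, fn: Callable[..., Any], args: Dict[str, Any],
             hooks: List[Hook]) -> Any:
    """Run one tool call through the hook chain."""
    for hook in hooks:                 # before-hooks run in registration order
        hook.before_tool(name, args)
    result = fn(**args)
    for hook in reversed(hooks):       # after-hooks unwind in reverse order
        result = hook.after_tool(name, result)
    return result


audit = AuditLog()
hooks = [audit, BlockDestructiveShell()]
echoed = run_tool("echo", lambda text: text.upper(), {"text": "hi"}, hooks)
```

Because the audit hook is registered first, a blocked call still leaves a `("call", "shell")` entry in the log, which is usually what you want from an audit trail.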

Feature comparison

| Capability | Pydantic Deep Agents | LangChain Deep Agents |
|---|---|---|
| Planning | | |
| Filesystem | | FilesystemMiddleware (same ops) |
| File editing | Hashline (+5 to +64pp accuracy) + | |
| Shell execution | 4 backends (Docker, Local, State, Composite) | |
| Sub-agents | sync, async, background, Q&A, nested spawning | |
| Multi-agent teams | SharedTodoList, TeamMessageBus, AgentTeam | — |
| Context management | LLM summary + sliding window + eviction | Concurrent summarization + history offload |
| Middleware | 7 hooks, chains, parallel, guardrails | 7 middleware types (before/wrap/after) |
| Cost tracking | USD budget enforcement, on by default | Token counting in status bar |
| Checkpointing | Save/rewind/fork with FileCheckpointStore | LangGraph native checkpointer |
| Permissions | 4 presets (DEFAULT, PERMISSIVE, READONLY, STRICT) | Per-tool HITL (approve, reject, auto-approve) |
| Visual debugging | Logfire | LangGraph Studio |
| Remote sandboxes | Docker, Local, Daytona | Modal, Runloop, Daytona |
| Editor integration | — | ACP (Zed, with plan panel + HITL) |
| Benchmarks | — | Harbor (Terminal-Bench 2.0, ATIF logging) |
| Type safety | Pydantic models end-to-end | LangChain typing |

What LangChain Deep Agents does better

IDE integration with Agent Client Protocol

ACP lets your AI agent work directly inside your IDE. The Zed editor already supports it: the agent streams responses into your editor, updates a live plan panel in the sidebar, and shows approval dialogs for shell commands. Pydantic Deep Agents has no editor integration as of today.

Cloud Sandboxes and Benchmarking

LangChain supports Modal, Runloop, and Daytona for cloud sandboxes. It also ships Harbor, a built-in evaluation framework for benchmarking agents against Terminal-Bench 2.0. Pydantic Deep Agents supports Docker, local backends, and Daytona, but does not have Modal/Runloop or built-in benchmarks yet.

What Pydantic Deep Agents does better

Hashline: up to +64pp more accurate file editing

Standard LLM file editing uses str_replace: the model reproduces the exact string to find, then the replacement. This breaks constantly: whitespace errors, indentation mismatches, partial matches. In our production workloads, string replacement failed about 30% of the time on smaller models.

Hashline takes a different approach. When the agent reads a file, each line gets a 2-character content hash:

    1:a3|function hello() {
    2:f1| return "world";
    3:0e|}

Instead of “find this exact string and replace it,” we say “edit lines 1:a3 through 3:0e.” The model references lines by number and hash, not by reproducing content. Fewer output tokens, no ambiguity, and if the file changed between read and edit, the hash check catches it.

The approach is based on Can Boluk’s hashline research and was benchmarked across 16 models: +5 to +64 percentage points improvement vs str_replace, with the biggest gains on smaller models, where exact string reproduction is hardest.
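The mechanism can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the library's implementation: the hash function (truncated SHA-256), the `render` and `apply_edit` helpers, and `StaleEditError` are all hypothetical, and the real 2-character hash scheme may differ.

```python
import hashlib
from typing import List


class StaleEditError(Exception):
    """Hypothetical error: a referenced line no longer matches its hash."""


def line_hash(text: str) -> str:
    # Assumption: first two hex characters of SHA-256 over the line content.
    return hashlib.sha256(text.encode("utf-8")).hexdigest()[:2]


def render(lines: List[str]) -> List[str]:
    """Prefix each line with `number:hash|`, the form the model reads."""
    return [f"{i}:{line_hash(t)}|{t}" for i, t in enumerate(lines, start=1)]


def apply_edit(lines: List[str], start: str, end: str,
               replacement: List[str]) -> List[str]:
    """Replace the inclusive range start..end, given as 'number:hash' refs."""

    def resolve(ref: str) -> int:
        number, _, digest = ref.partition(":")
        index = int(number) - 1
        # Reject the edit if the line is missing or its content has changed.
        if not 0 <= index < len(lines) or line_hash(lines[index]) != digest:
            raise StaleEditError(f"line {ref} changed since it was read")
        return index

    first, last = resolve(start), resolve(end)
    if first > last:
        raise ValueError("start must not come after end")
    return lines[:first] + replacement + lines[last + 1:]


source = ["function hello() {", '  return "world";', "}"]
refs = [tagged.split("|", 1)[0] for tagged in render(source)]
edited = apply_edit(source, refs[1], refs[1], ['  return "hello";'])
```

The model only has to emit the two short references and the new lines, so the edit cannot fail on whitespace reproduction. A two-character hash can collide, which is why the line number is checked alongside it.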

Multi-Agent teams

Most frameworks support sub-agent delegation: spawn one agent, wait for it to finish. But sub-agents are isolated workers. They do not know about each other.

Pydantic Deep Agents adds Agent Teams: multiple agents working together with shared state. SharedTodoList is an asyncio.Lock-backed task list that agents claim from concurrently, with dependency tracking. TeamMessageBus provides per-agent message queues for peer-to-peer communication. AgentTeam ties it together with spawn, assign, check, message, and dissolve tools.

There is no central orchestrator deciding who does what. Instead, agents coordinate through a shared loop and shared state, claiming tasks and messaging each other as needed.
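A rough sketch of the claiming pattern, assuming nothing about the real SharedTodoList API: `Task`, `SharedTodoSketch`, `worker`, and `demo` are hypothetical names. It shows how an asyncio.Lock lets several agents pull from one list without double-claiming, and how dependencies gate which tasks are claimable.

```python
import asyncio
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple


@dataclass
class Task:
    id: str
    depends_on: Tuple[str, ...] = ()
    owner: Optional[str] = None
    done: bool = False


class SharedTodoSketch:
    """Hypothetical stand-in for a lock-backed shared task list."""

    def __init__(self, tasks: List[Task]) -> None:
        self._tasks: Dict[str, Task] = {t.id: t for t in tasks}
        self._lock = asyncio.Lock()
        self.completed: List[str] = []  # completion order, written under the lock

    async def claim(self, agent: str) -> Optional[Task]:
        """Atomically hand out the first unowned task whose dependencies are done."""
        async with self._lock:
            for task in self._tasks.values():
                ready = all(self._tasks[d].done for d in task.depends_on)
                if task.owner is None and ready:
                    task.owner = agent
                    return task
            return None

    async def complete(self, task_id: str) -> None:
        async with self._lock:
            self._tasks[task_id].done = True
            self.completed.append(task_id)

    async def all_done(self) -> bool:
        async with self._lock:
            return all(t.done for t in self._tasks.values())


async def worker(name: str, todo: SharedTodoSketch) -> None:
    while True:
        task = await todo.claim(name)
        if task is None:
            if await todo.all_done():
                return
            await asyncio.sleep(0)  # yield so a peer can finish a dependency
            continue
        await asyncio.sleep(0)      # stand-in for the agent's real work
        await todo.complete(task.id)


async def demo() -> List[str]:
    todo = SharedTodoSketch([
        Task("design"),
        Task("build", ("design",)),
        Task("docs", ("design",)),
        Task("test", ("build",)),
    ])
    await asyncio.gather(worker("agent-a", todo), worker("agent-b", todo))
    return todo.completed
```

Running `asyncio.run(demo())` returns the completion order. Which agent takes which task can vary, but a task never completes before the tasks it depends on.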

Checkpoint rewind and fork

Long-running agents fail. If the model makes a wrong decision at step 40 of a 60-step task, with most frameworks, you start over. With checkpointing, you rewind to step 39 and try again.

Both frameworks support checkpointing. LangGraph’s checkpointer is battle-tested and saves state at every graph node. But in Pydantic Deep Agents, the agent itself can call rewind and fork as tools. LangChain Deep Agents does not expose this. The difference matters: the agent can save a labeled checkpoint before a risky operation, and if it fails, rewind autonomously without human intervention.

Three save frequencies: every_tool (default), every_turn, manual_only. Auto-pruning keeps the last 20 checkpoints. Fork creates a new session from a past checkpoint, letting you explore different approaches from the same starting point. None of these are available as agent-callable tools in LangChain Deep Agents.
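The save, rewind, and fork behavior described above can be approximated with an in-memory store. This is a sketch only: `Checkpoint` and `CheckpointSketch` are hypothetical names, the state is reduced to a list of messages, and it does not reflect FileCheckpointStore's actual interface.

```python
import copy
from dataclasses import dataclass
from typing import List


@dataclass
class Checkpoint:
    step: int
    label: str
    messages: List[str]


class CheckpointSketch:
    """Hypothetical in-memory checkpoint store with pruning, rewind, and fork."""

    def __init__(self, max_kept: int = 20) -> None:
        self.max_kept = max_kept
        self.checkpoints: List[Checkpoint] = []

    def save(self, step: int, label: str, messages: List[str]) -> None:
        # Deep-copy so later mutations of the live state do not leak in.
        self.checkpoints.append(Checkpoint(step, label, copy.deepcopy(messages)))
        del self.checkpoints[:-self.max_kept]  # auto-prune to the newest max_kept

    def _find(self, label: str) -> int:
        for index in range(len(self.checkpoints) - 1, -1, -1):
            if self.checkpoints[index].label == label:
                return index
        raise KeyError(label)

    def rewind(self, label: str) -> List[str]:
        """Drop everything after the labeled checkpoint and return its state."""
        index = self._find(label)
        del self.checkpoints[index + 1:]
        return copy.deepcopy(self.checkpoints[index].messages)

    def fork(self, label: str) -> "CheckpointSketch":
        """Start a new, independent session seeded from the labeled checkpoint."""
        source = self.checkpoints[self._find(label)]
        child = CheckpointSketch(self.max_kept)
        child.save(source.step, source.label, source.messages)
        return child


store = CheckpointSketch()
history: List[str] = []
for step in range(1, 26):           # simulate 25 tool calls, saving after each
    history.append(f"tool call {step}")
    store.save(step, f"step-{step}", history)

branch = store.fork("step-12")      # explore an alternative from step 12
restored = store.rewind("step-10")  # discard steps 11..25 in the main session
```

Exposing `save`, `rewind`, and `fork` as tools is what lets the agent, rather than a human operator, decide when to roll back.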

6 Independent PyPI Packages

Each capability is a standalone package:

| Package | What it does |
|---|---|
| pydantic-ai-backend | File storage, Docker sandbox, permissions, hashline |
| pydantic-ai-todo | Task tracking, subtasks, dependencies |
| subagents-pydantic-ai | Delegation, background tasks, Q&A |
| | Context compression |
| pydantic-ai-middleware | 7 hooks, chains, guardrails |
| pydantic-deep | Everything combined |

pip install pydantic-deep installs everything. But if you only need middleware for an existing agent, pip install pydantic-ai-middleware is 100KB with no extra dependencies.

LangChain Deep Agents is a single package. Simpler to install, but you cannot use a single piece without the whole framework.

Cost tracking and permission presets

Cost tracking is on by default. Set a USD budget, and when it is exceeded, the next model call raises BudgetExceededError before making an API request. Without budget limits, an agent stuck in a retry loop can silently rack up API costs, a common issue in long-running autonomous workflows.
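The check-before-call behavior can be sketched as follows. Everything here is illustrative: the `BudgetExceededError` class is a local stand-in for the error named above rather than an import from the library, and `BudgetSketch`, `guarded_call`, the fake model, and the per-token prices are assumptions.

```python
from typing import Callable, List, Tuple


class BudgetExceededError(RuntimeError):
    """Local stand-in for the error named in the text (not the library class)."""


class BudgetSketch:
    def __init__(self, limit_usd: float, usd_per_1k_input: float,
                 usd_per_1k_output: float) -> None:
        self.limit_usd = limit_usd
        self.usd_per_1k_input = usd_per_1k_input
        self.usd_per_1k_output = usd_per_1k_output
        self.spent_usd = 0.0

    def check(self) -> None:
        """Raise once spending has gone past the limit."""
        if self.spent_usd > self.limit_usd:
            raise BudgetExceededError(
                f"spent ${self.spent_usd:.2f} of ${self.limit_usd:.2f}")

    def record(self, input_tokens: int, output_tokens: int) -> None:
        self.spent_usd += (input_tokens / 1000 * self.usd_per_1k_input
                           + output_tokens / 1000 * self.usd_per_1k_output)


def guarded_call(budget: BudgetSketch,
                 model: Callable[[str], Tuple[str, int, int]],
                 prompt: str) -> str:
    budget.check()  # fail before the request, so no further cost is incurred
    reply, input_tokens, output_tokens = model(prompt)
    budget.record(input_tokens, output_tokens)
    return reply


calls: List[str] = []


def fake_model(prompt: str) -> Tuple[str, int, int]:
    """Pretend model: returns a reply plus input and output token counts."""
    calls.append(prompt)
    return "ok", 1000, 1000


# Hypothetical prices: $0.50 per 1k input tokens, $1.50 per 1k output tokens.
budget = BudgetSketch(limit_usd=3.0, usd_per_1k_input=0.5, usd_per_1k_output=1.5)
guarded_call(budget, fake_model, "step 1")  # running total: $2.00
guarded_call(budget, fake_model, "step 2")  # running total: $4.00, over the limit
```

A third `guarded_call` would now raise before `fake_model` is invoked, so a runaway retry loop stops at most one call past the limit.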

Four permission presets (DEFAULT, PERMISSIVE, READONLY, STRICT) control what the agent can do without asking. They come with custom glob rules, built-in secret detection (.env, credentials, keys), and system file protection.
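As a rough illustration of how presets and glob rules can combine, the sketch below maps each preset to an allow/ask/deny decision per action and always denies paths that look like secrets. The preset names come from the text, but what each preset permits, the glob patterns, and the `decide` and `is_secret` helpers are assumptions made for this example.

```python
from fnmatch import fnmatchcase
from pathlib import PurePosixPath
from typing import Optional

# Assumed secret patterns, matched against the file name only.
SECRET_PATTERNS = (".env", ".env.*", "*.pem", "*credentials*")

# Assumed semantics for each preset: "allow", "ask" (human approval), or "deny".
PRESETS = {
    "DEFAULT": {"read": "allow", "write": "ask", "shell": "ask"},
    "PERMISSIVE": {"read": "allow", "write": "allow", "shell": "allow"},
    "READONLY": {"read": "allow", "write": "deny", "shell": "deny"},
    "STRICT": {"read": "ask", "write": "ask", "shell": "ask"},
}


def is_secret(path: str) -> bool:
    """True if the file name matches any secret glob pattern."""
    name = PurePosixPath(path).name
    return any(fnmatchcase(name, pattern) for pattern in SECRET_PATTERNS)


def decide(preset: str, action: str, path: Optional[str] = None) -> str:
    """Return 'allow', 'ask', or 'deny' for an action under a preset."""
    if path is not None and is_secret(path):
        return "deny"  # secret detection overrides every preset
    return PRESETS[preset][action]
```

For example, `decide("PERMISSIVE", "read", "deploy/.env")` returns `"deny"` in this sketch even though the preset otherwise allows reads, because the secret check runs first.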

Decision Guide

| Your situation | Pick | Why |
|---|---|---|
| Already on LangGraph | LangChain | Zero migration, native integration |
| Need visual state debugging | LangChain | LangGraph Studio has no equivalent |
| Need Modal/Runloop sandboxes | LangChain | Built-in partner packages |
| Need IDE integration | LangChain | ACP with Zed |
| Already on Pydantic AI | Pydantic | Native toolset integration |
| Need multi-agent teams | Pydantic | SharedTodoList, MessageBus, AgentTeam |
| Need accurate file editing | Pydantic | Hashline (+5 to +64pp on 16 models) |
| Need standalone components | Pydantic | 6 PyPI packages, each works alone |
| Need USD budget enforcement | Pydantic | Built-in, on by default |
| Need checkpoint rewind/fork | Pydantic | Agent-callable rewind, session forking |
| Starting fresh | Try both | Same pip install, same effort |

Known Limitations

Pydantic Deep Agents: No visual debugger, no editor integration, no benchmark framework, smaller community.

LangChain Deep Agents: No hashline editing, no agent teams, no budget enforcement, no rewind/fork, components aren’t individually installable.

Start Building

Pydantic Deep Agents: GitHub | Docs

LangChain Deep Agents: GitHub | Docs

Both are open-source. Both install in one command. Star whichever you use.

Vstorm builds production AI agent systems and open-sources the tooling.

We maintain Pydantic Deep Agents and its 6 component libraries. For architecture discussions, please reach out.
